Catch stronger signals.

Ignore weak ones.

Review your code across multiple LLM providers and keep only the findings that hold up — with confidence scoring and provider disagreement detection.

Built as a second validation layer in your review pipeline, before release or handoff.

No AI magic claims. Just cross-checked signals.

When AI writes most of your code

When AI generates large parts of your code, review speed increases — and so does uncertainty. NexaVerify adds a cross-check before that uncertainty reaches production.

Before client handoff or release

Add a validation pass when the stakes are higher. Review confidence scores before delivery.

Freelancers and consultants

Justify your audit fees with a professional-grade multi-LLM consensus report.

Best used before client delivery

Best used before production release

Best used after heavy AI-generated code

Best used on auth, shell, or runtime-sensitive logic

Single-model review

One LLM returns findings. Some are real. Some are hallucinations. You can't tell which is which, so you either trust everything (and waste time on false positives) or ignore everything (and miss real bugs).

Multi-provider consensus

Multiple LLMs analyze the same code independently. NexaVerify compares their outputs — issues confirmed across providers get higher confidence. Isolated findings get lower confidence. Disagreements are surfaced, not hidden.
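
To make the idea concrete, here is a minimal sketch of cross-provider consensus. It is not NexaVerify's actual engine: the finding fields (`file`, `line`, `type`), the sample data, and the scoring rule (share of providers that reported the same issue) are all assumptions chosen for illustration.

```python
from collections import defaultdict

def consensus(findings_by_provider):
    """Group findings by (file, line, type) and score them by provider agreement."""
    confirmed = defaultdict(set)
    for provider, findings in findings_by_provider.items():
        for f in findings:
            key = (f["file"], f["line"], f["type"])
            confirmed[key].add(provider)

    total = len(findings_by_provider)
    results = []
    for (file, line, kind), providers in confirmed.items():
        results.append({
            "file": file,
            "line": line,
            "type": kind,
            "confirmed_by": sorted(providers),
            # Hypothetical score: fraction of providers that reported the issue.
            "confidence": len(providers) / total,
        })
    # Most widely confirmed findings first.
    results.sort(key=lambda r: r["confidence"], reverse=True)
    return results

# Made-up provider outputs, for illustration only.
runs = {
    "gemini": [{"file": "app.py", "line": 5, "type": "security"},
               {"file": "db.py", "line": 3, "type": "bug"}],
    "ollama": [{"file": "app.py", "line": 5, "type": "security"}],
}
ranked = consensus(runs)
```

In this sketch the `app.py` finding is reported by both providers and ranks first, while the `db.py` finding is isolated and gets a lower score.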

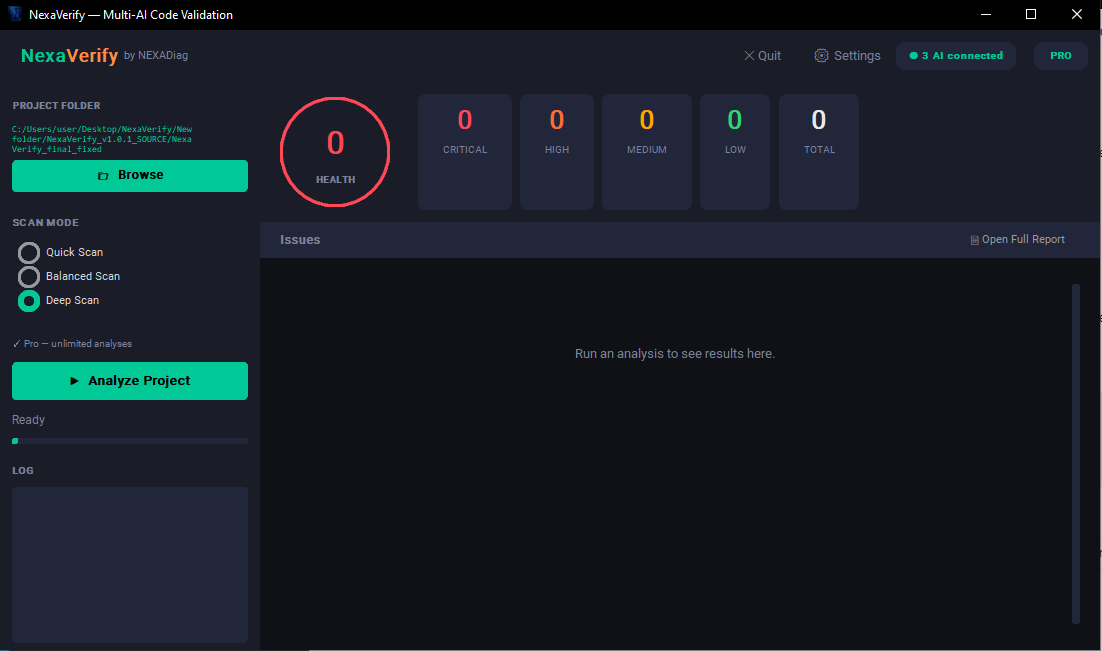

Quick

Fastest sanity pass. Small checks, quick signal.

Balanced ★

Best default. Good tradeoff between speed, signal quality, and cost.

Deep

Before delivery, client audits, high-risk code.

Select project. NexaVerify builds local view: files, chunks, stack, hotspots.

Providers return findings. Consensus engine filters weak or isolated signals.

HTML for people. JSON for automation, archiving, diffing between runs.

Validated issue list

Findings ranked by severity, weighted by how many providers agreed.

Confidence per finding

Single-provider detection: lower confidence. Multi-provider agreement: higher confidence.

Disagreement flags

When providers disagree on severity, it's surfaced instead of hidden.
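
A severity disagreement can be expressed as a spread between the lowest and highest rating. The sketch below assumes a four-level scale (`LOW`, `MEDIUM`, `HIGH`, `CRITICAL`) and hypothetical function names; the real tool may order or name its levels differently.

```python
# Assumed severity scale, lowest to highest.
SEVERITY_ORDER = ["LOW", "MEDIUM", "HIGH", "CRITICAL"]

def severity_spread(ratings):
    """Return how many levels apart the lowest and highest ratings are."""
    levels = [SEVERITY_ORDER.index(s) for s in ratings.values()]
    return max(levels) - min(levels)

def disagreement_flag(ratings):
    """Return a human-readable flag, or None when all providers agree."""
    spread = severity_spread(ratings)
    if spread == 0:
        return None
    detail = ", ".join(f"{provider}: {sev}" for provider, sev in ratings.items())
    unit = "level" if spread == 1 else "levels"
    return f"{detail} (severity spread: {spread} {unit})"

flag = disagreement_flag({"ollama": "HIGH", "gemini": "CRITICAL"})
```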

Dual output

HTML report for human review. JSON export for automation and diffing.
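
As an illustration of run-to-run diffing, the sketch below compares two JSON exports. The schema is an assumption (a top-level `findings` list with `file`, `line`, and `type` fields); check the actual export before relying on these names.

```python
import json

def finding_keys(report_json):
    """Extract a set of (file, line, type) keys from a report (assumed schema)."""
    report = json.loads(report_json)
    return {(f["file"], f["line"], f["type"]) for f in report["findings"]}

def diff_runs(old_json, new_json):
    """Classify findings as fixed, new, or unchanged between two runs."""
    old, new = finding_keys(old_json), finding_keys(new_json)
    return {
        "fixed": sorted(old - new),
        "new": sorted(new - old),
        "unchanged": sorted(old & new),
    }

# Made-up exports, for illustration only.
before = json.dumps({"findings": [
    {"file": "auth.py", "line": 3, "type": "bug"},
    {"file": "shell.py", "line": 5, "type": "security"},
]})
after = json.dumps({"findings": [
    {"file": "shell.py", "line": 5, "type": "security"},
    {"file": "api.py", "line": 9, "type": "bug"},
]})
changes = diff_runs(before, after)
```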

Provider health

See which providers succeeded, which failed, and why — in every report.

Prompt presets

Targeted review angles: bugs, security, performance, QA.

1. Get a free API key

Gemini's free tier signs up in 30 seconds at aistudio.google.com/apikey — no phone needed. Or use Groq, OpenAI, Claude — any provider you already have.


2. Paste in NexaVerify

Open the app and paste your key in Settings. Done. The key stays on your machine and is never sent to NEXADiag.

3. Scan a folder

Browse to your project, click Analyze. HTML report opens in your browser. That's it.

Self-test run · Balanced mode · 2/3 providers returned results · GPT failed with 429 quota error.

| File | Type | Severity | Confirmed by | Confidence |
|---|---|---|---|---|
| shell_risk.py:5 | security | HIGH | gemini ×1.5 | 65% |
| auth_bug.py | bug | MEDIUM | ollama ×0.9 | 50% |
| auth_bug.py:3 | bug | MEDIUM | gemini ×0.9 | 50% |
| runtime_bug.py:2 | bug | MEDIUM | gemini ×0.9 | 50% |
| runtime_bug.py:5 | bug | MEDIUM | gemini ×0.9 | 50% |

ollama: HIGH · gemini: CRITICAL — Severity spread: 1 level.

When a provider fails, the report is still generated with whatever data is available.
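
One way to get this behavior is to isolate each provider call and record its outcome. The sketch below is an assumption about the general pattern, not NexaVerify's code: `run_providers` and the stand-in provider functions are hypothetical, and the 429 error simply mirrors the self-test above.

```python
def run_providers(providers, code):
    """Call every provider; keep results from those that succeed and log the rest."""
    findings, health = {}, {}
    for name, review in providers.items():
        try:
            findings[name] = review(code)
            health[name] = "ok"
        except Exception as exc:  # one failing provider must not abort the run
            health[name] = f"failed: {exc}"
    return findings, health

# Stand-in providers, for illustration only.
def fake_gemini(code):
    return [{"file": "shell_risk.py", "line": 5, "type": "security"}]

def fake_gpt(code):
    raise RuntimeError("429 quota exceeded")

findings, health = run_providers({"gemini": fake_gemini, "gpt": fake_gpt}, "print('hi')")
```

Here `findings` holds only the Gemini results, while `health` records both the success and the reason for the GPT failure, so a report can still be produced.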

✔ Available today

Multi-provider consensus with confidence scoring. Quick / Balanced / Deep modes. HTML + JSON reports. Disagreement detection. Local-first with your API keys.

🚧 Currently improving

Smarter consensus weighting. Better grouping of related issues. Improved performance on large codebases. Scan history and run-to-run comparison.

🤝 Built with users, not for them

Early users directly influence priorities. Not a closed SaaS — a tool that grows with real workflows. Buyers today shape what ships next.

No reviews yet. Be the first to try it and tell me what works, and what doesn't.

Honest feedback > fake stars. Drop me a note via the email or links below.

Questions, feedback, bug reports, ideas — all welcome at nexadiag@gmail.com. Every email gets a real reply, usually within 48h.